Google might have joined the generative AI chatbot party late, but the U.S. tech behemoth is now going all-in on artificial intelligence. At its annual developer conference in Silicon Valley on May 10, the company announced a slew of AI integrations across its core products, including Search and Gmail. Those updates are also coming to Bard.

Bard is a conversational AI chatbot developed by Google and originally built on its LaMDA family of large language models. Launched in March, the bot competes directly with ChatGPT, the more popular free-to-use chatbot from OpenAI, which was released in late November 2022.

Google opens Bard to public

Google CEO Sundar Pichai revealed at the conference that Bard is being opened to users in 180 countries for free. “We have been applying AI for a while, with generative AI we are taking the next step,” he told thousands of developers gathered for the event.

“We are reimagining all our core products, including search,” said Pichai, as reported by AFP. By removing the waitlist, Google effectively opened the chatbot to people outside the U.S. and UK, where it had been available in English on a trial basis since March.

Officials said Bard will support 40 languages in the coming months. We gave the updated Bard a try. Here are seven things Google’s AI chatbot can do for free that the free version of its Microsoft-backed rival ChatGPT cannot.

Internet search

After an underwhelming initial launch, Bard is upfront about its own limitations. It greets you with a disclaimer: “Bard may give inaccurate or inappropriate responses,” the chatbot says. “When in doubt, use the ‘Google it’ button to check Bard’s responses.”

It then immediately asks users to “please rate responses and flag anything that may be offensive or unsafe.” Point taken. The bot says its latest updates are experimental, and there is a clear signpost to this effect at the top left of Bard’s chat page.

One key advantage of Bard is that it is up to date. While the publicly available version of ChatGPT is not connected to the Internet and cannot search the web, Bard has real-time access to Internet search.

This means Bard can answer questions about recent events. ChatGPT, by comparison, was trained on data that ends in September 2021, so it can only offer answers up to that date. In other words, its responses can be outdated, missing nearly two years of developments.

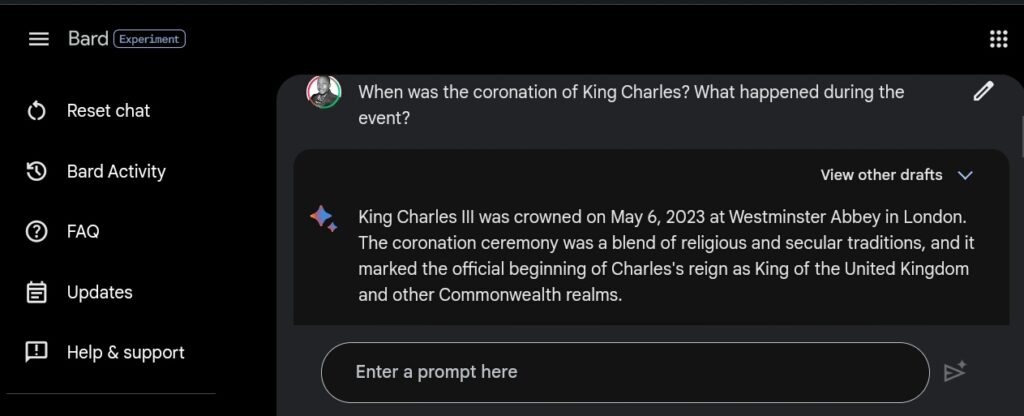

For example, we asked Bard: “When was the coronation of King Charles? What happened during the event?” It responded with correct, up-to-date information: “King Charles III was crowned on May 6, 2023 at Westminster Abbey in London” (see picture below).

Bard also described the event in detail, including the procession, the church service, and how “Charles was anointed with holy oil.”

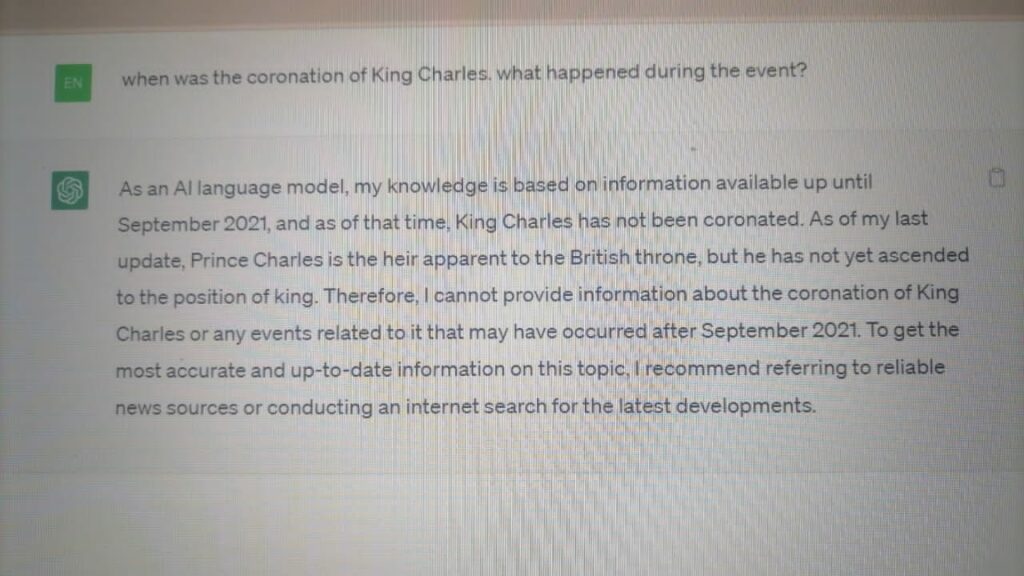

We gave ChatGPT the same prompt, and the bot replied that it was unable to offer an accurate response. “As of my last update, Prince Charles is the heir apparent to the British throne, but he has not yet ascended to the position of king,” it said.

Instead, ChatGPT suggested referring to “reliable news sources or conducting an Internet search on the latest developments,” because it did not have the “most accurate and up-to-date information on this topic.”

See searches related to your prompt

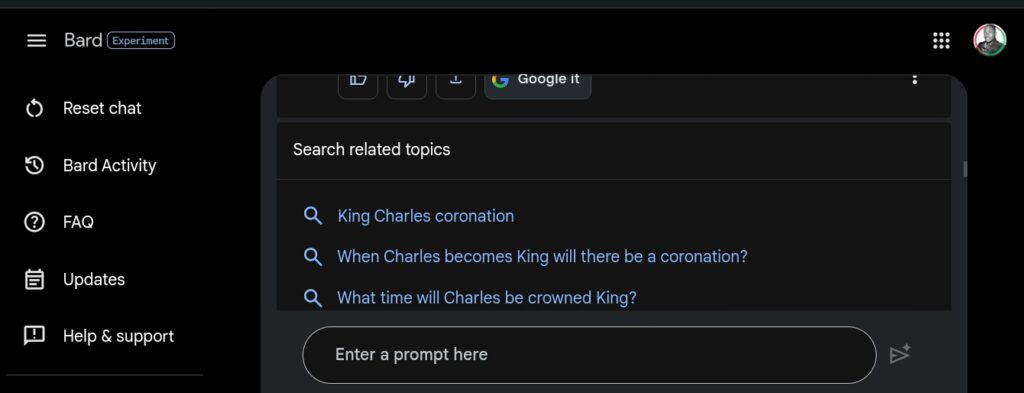

Remember that “Google it” button? We put it to the test. Whenever you are not satisfied with a result from Bard, the chatbot invites you to verify its response directly with the more reliable Google search engine.

Once Bard said Charles’ coronation took place on May 6, 2023, we clicked the button to check whether Google Search backed up the date and its account of the ceremony. It did. The bot even suggested three related searches on the topic. ChatGPT has no equivalent: this feature lets you check sources quickly and in real time.

Summarizing web pages

Because Bard is connected to the Internet, you can ask it questions about any website. This gives Bard an edge over the offline ChatGPT and makes it a very useful tool for “summaries or understanding complex subjects,” as tech educator Paul Couvert puts it.

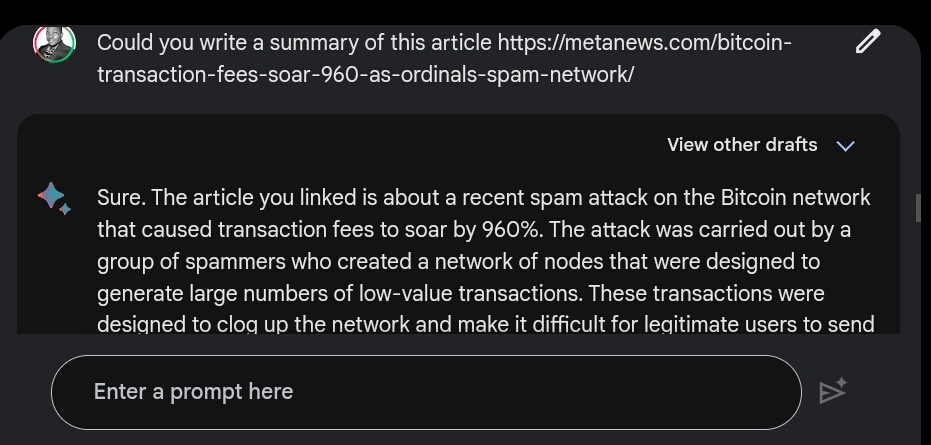

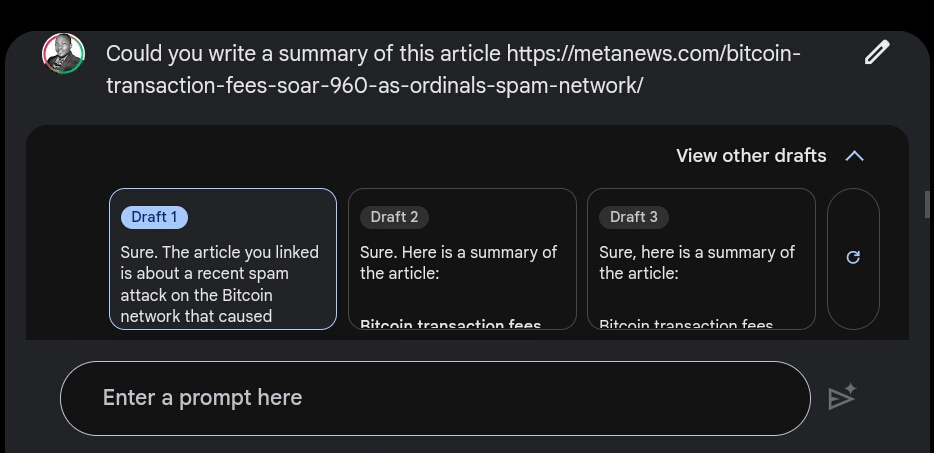

For example, we asked Bard to summarize an article we recently published here at MetaNews, simply by providing a link to the post. The article detailed the soaring cost of sending a transaction on the Bitcoin blockchain due to increased Ordinals activity.

Bard returned a clear and precise summary of the post. However, the chatbot also exaggerated the situation, suggesting that users minting NFTs on Bitcoin had deliberately created low-value transactions to clog the network.

Some in the Bitcoin community have suggested that the current congestion is the result of a planned attack, but our original article did not mention this.

Bard provides multiple drafts of its answers

When you query Bard, the chatbot automatically generates three versions, or drafts, of its answer. If the default response does not meet your expectations, you can pick whichever draft you prefer. As seen in the picture below, we got three possible answers to our prompt on rising Bitcoin transaction fees.

Export generated text

Unlike ChatGPT, Bard lets you export a response directly, either by email or to Google Docs. To do so, click the export button next to the “Google it” button.

You can talk to Bard

As tech and AI educator Paul Couvert demonstrated, you can talk to Bard instead of typing. This is very helpful for anyone not keen on spending time writing out prompts.

2. Voice input

You can talk to Bard instead of writing to it.

It will save you a lot of time. pic.twitter.com/ZuS0OHJfw9

— Paul Couvert (@itsPaulAi) May 11, 2023

Explain code

Bard can also explain code that you share through a link. “It’ll be able to explain what it’s for and how it works,” Paul Couvert says.

6. Explain code

Give a link to Bard and ask it a question about a file.

It'll be able to explain what it's for and how it works.

Prompt → Can you explain what is the file agent py in this repo? (your link) pic.twitter.com/r0BAro9lh9

— Paul Couvert (@itsPaulAi) May 11, 2023

Google’s improvements to Bard came a week after rival Microsoft opened its generative AI tools, which are powered by OpenAI models, to everyone.
