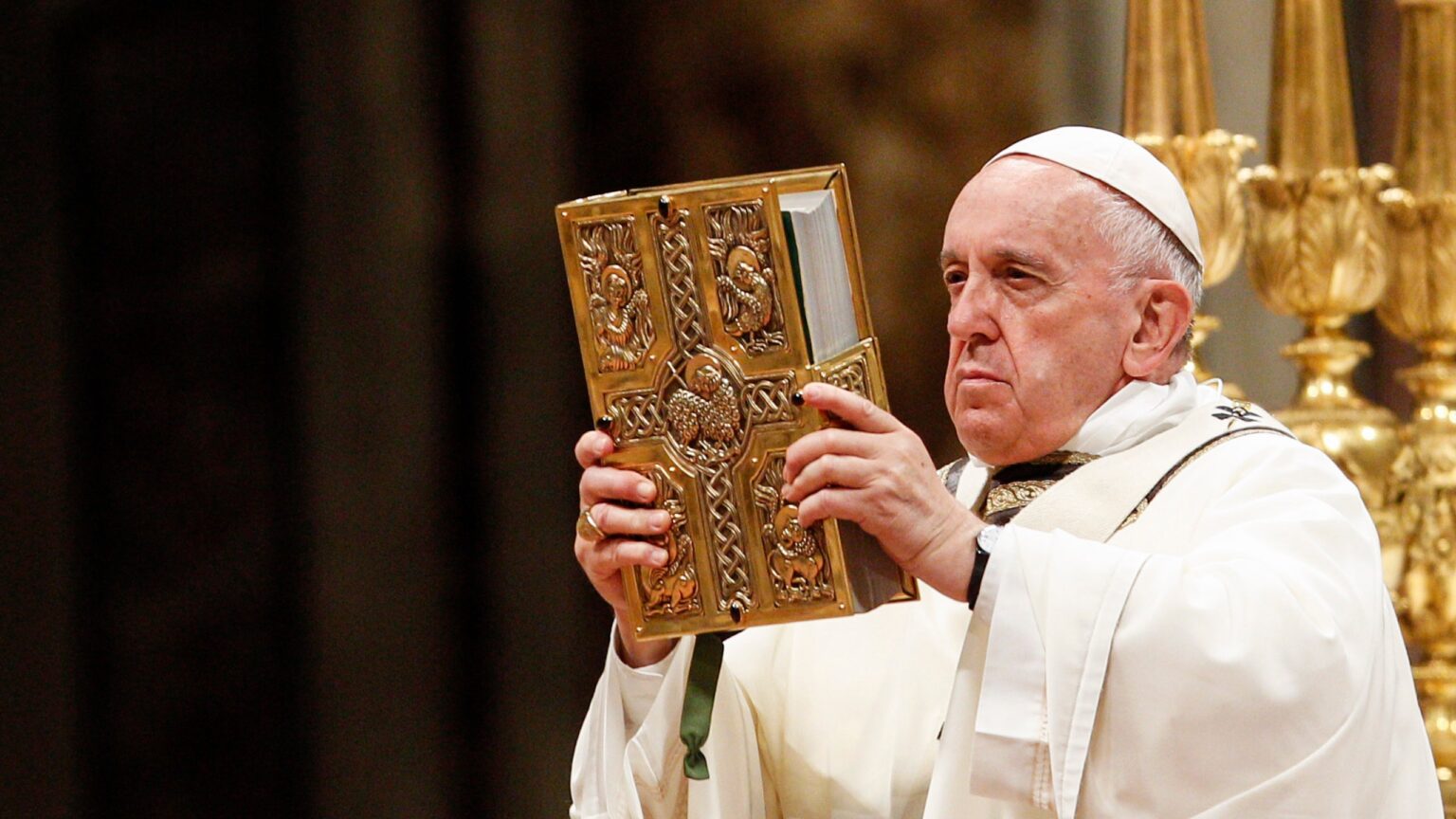

Pope Francis released a handbook on the ethics of artificial intelligence through the Vatican. He outlined a set of principles that technology companies, such as ChatGPT creator OpenAI, should follow as they develop and deploy AI programs.

The handbook is titled “Ethics in the Age of Disruptive Technologies: An Operational Roadmap.” It was developed by the Vatican’s new Institute for Technology, Ethics, and Culture (ITEC), working with Santa Clara University’s Markkula Center for Applied Ethics.

The guidelines argue that AI should be used for the common good of humanity and the environment. They also call for artificial intelligence systems to be transparent, accountable, and safe – apparently in order to help avoid an AI-fueled apocalypse.

Unlikely source for an AI framework

Despite their seemingly unrelated backgrounds, Pope Francis and his colleagues are uniquely qualified to offer their insights on AI. Father Brendan McGuire, pastor of St. Simon Parish in California, believes the Vatican’s initiative aligns with the church’s longstanding interests.

“The Pope has always had a large view of the world and of humanity, and he believes that technology is a good thing. But as we develop it, it comes time to ask the deeper questions,” said Father McGuire, who is also an advisor to ITEC, as Gizmodo reported.

“Technology executives from all over Silicon Valley have been coming to me for years and saying, ‘You need to help us, there’s a lot of stuff on the horizon and we aren’t ready.’ The idea was to use the Vatican’s convening power to bring executives from the entire world together.”

Pope Francis’ handbook aims to guide the tech industry through the ethical dilemmas in AI. It sets out one main principle around which firms can build their values, “ensuring that our actions are for the common good of humanity and the environment.”

The principle is split into seven guidelines, including “Respect for Human Dignity and Rights” and “Promote Transparency and Explainability.” These guidelines are further divided into 46 specific, actionable steps, each with a definition, example, and implementation strategy.

For example, the guideline that addresses human dignity and rights includes a focus on “not collecting more data than necessary.” It says “collected data should be stored in a manner that optimises the protection of privacy and confidentiality.”

"Artificial intelligence and other digital technologies have always served as a double-edged sword." #IACS Trustee @kballarta joined @CardinalTurkson at the Vatican for discussions on the ethical concerns surrounding emerging technology. https://t.co/dZjc7q59FV #AI #Ethics pic.twitter.com/x9UdW1fxAw

— Institute for Advanced Catholic Studies at USC (@IACSUSC) June 27, 2023

Fast-tracking the rules

The handbook states that AI companies should go beyond legal requirements, focusing on protecting medical and financial data and on fulfilling their responsibilities to users. AI chatbots like ChatGPT are already under pressure over allegations of illegally harvesting user data.

“The goal is to actually empower the people inside the company as people are going about their everyday work, whether it’s writing a code or a technical manual, or thinking about issues around workplace culture,” Gregg Skeet, one of the handbook’s authors, told Gizmodo.

“We’ve tried to write in the language of business and engineers so the reserves will actually get used and they’re similar to things and standards they’ve seen before.”

In March, Italian regulators temporarily banned the use of ChatGPT over privacy concerns. Earlier this year, leaders of the G7 nations met in Japan and called for the development of technical standards to keep AI “trustworthy.”

Many others are worried AI could lead to the end of the human race. Hundreds of experts recently signed a 22-word letter calling for world leaders to prioritize “mitigating the risk of extinction from AI,” alongside other large-scale risks such as pandemics and nuclear war.

The Vatican hopes that its handbook will give companies something to work with as regulators around the world fine-tune their own rules on AI.

“Major guardrails are absolutely necessary, and countries and governments will implement them in time,” said Father McGuire.

“But this book plays a significant role in fast-tracking the approach to design and consumer implementation. That’s where we’re trying to enable companies to meet the standards we need way ahead of time,” he added.
